
Overview ¶

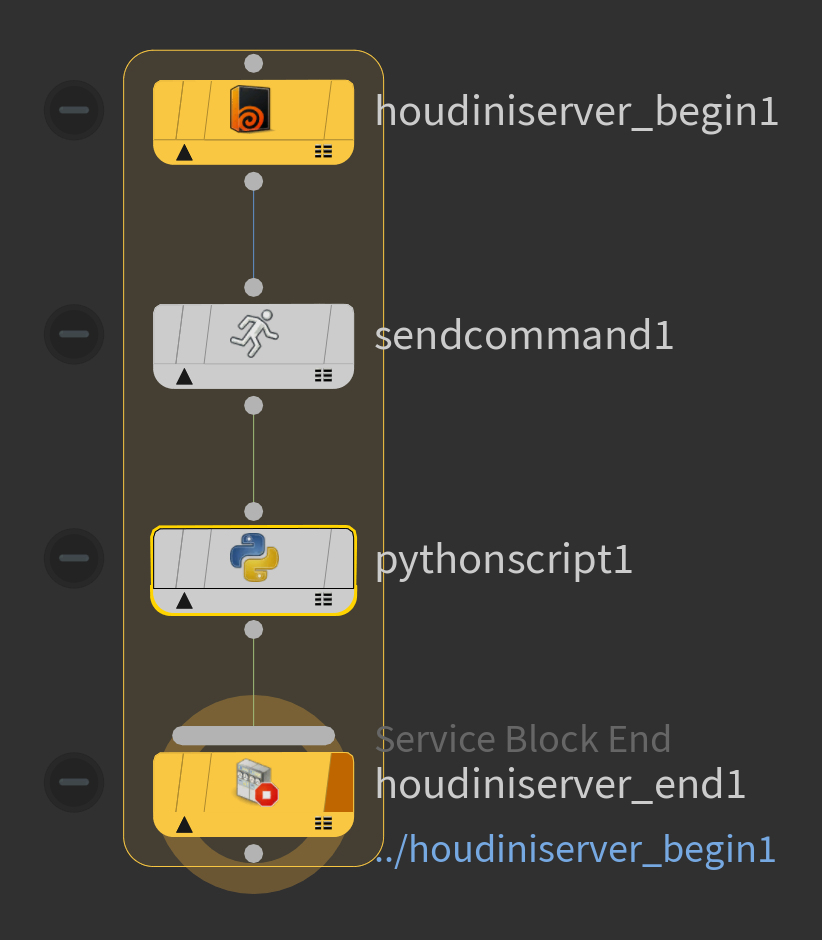

Service blocks combine PDG services with feedback loops. A service block creates one or more loops with a fixed number of iterations and assigns a service worker process to that loop. The service process is locked to the loop and won’t be used by other work items in the graph until the loop has completed. Iterations within the loop cook sequentially in depth-first order, starting from the Begin node and cooking down to the End node.

You can configure compatible nodes within the block to execute on the service process assigned to the loop by changing their cook type to Service and enabling the Run on Service Block parameter. The Command Send TOP node always runs on the containing service block. Other nodes, such as Attribute Create or File Pattern, execute normally, but their work items still follow the depth-first cook order imposed by the loop.

PDG includes several built-in service blocks, such as the Maya Service Block and the Houdini Service Block.
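The depth-first cook order described in the overview can be pictured with a small model. This is only an illustrative sketch in plain Python, not PDG code: the function `cook_order` and the node names are hypothetical, and the model assumes a single loop whose nodes are wired in one chain from Begin to End.

```python
from typing import List, Tuple


def cook_order(iterations: int, nodes: List[str]) -> List[Tuple[int, str]]:
    """Return (iteration, node) pairs in depth-first order.

    Hypothetical model: every node of iteration i cooks, from the Begin
    node down to the End node, before iteration i + 1 starts.
    """
    order = []
    for iteration in range(iterations):
        for node in nodes:
            order.append((iteration, node))
    return order


# Hypothetical node names standing in for the nodes in a block.
chain = ["begin", "command_send", "attribute_create", "end"]
for iteration, node in cook_order(2, chain):
    print(f"iteration {iteration}: {node}")
```

In this model a node such as Attribute Create does not run on the service process, yet it still appears in sequence inside each iteration, which matches the ordering constraint the loop imposes.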

How to ¶

Create a Service Block ¶

-

In a TOP network editor, press ⇥ Tab and choose a service block tool, such as Maya Service Block TOP or Houdini Service Block TOP.

The tool puts down a Block Begin node and a Service Block End node.

-

Select the Begin node. In the parameter editor, choose how to specify the number of sessions:

-

The number of sessions determines how many work items each loop in the block should have. The default is to run the number of iterations specified in the Number of sessions parameter. If the Begin node has upstream items, the block creates a loop of size ‹session_count› for each incoming work item.

-

You can turn on Session count from upstream items to create a single loop, with one iteration for each upstream item.

-

Multiple sessions from the same loop are cooked serially: the block cooks the first session from top to bottom before starting the second session.

-

Sessions from different loops can run in parallel.

-

Wire Command Send nodes between the Begin and End nodes, using their inputs and outputs, to make them part of the loop.

-

The Command Send node is able to send script code to the service process.

-

Depending on the type of service block, you can also run other service-based nodes in the block.

-

For example, if the block uses the Houdini service, you can include an HDA Processor or ROP Fetch inside the block and configure it to run on the shared service process.

-

Nodes that can’t run on the service can also be included; they use the normal scheduling workflow.

-

Houdini draws a border around the nodes in the block to help you visualize it.

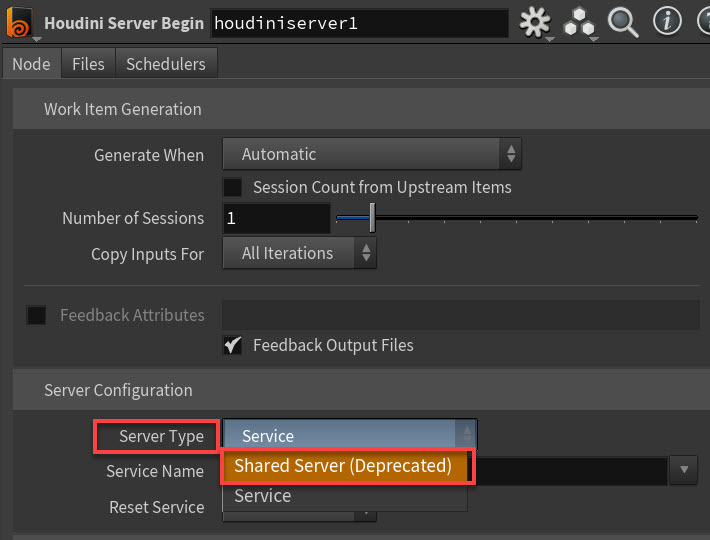
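The session-count rules from step 2 can be summarized in a short model. This is a hedged sketch in plain Python, not the PDG API: `plan_loops` and its parameters are hypothetical names, and the case with no upstream items is assumed to produce exactly one loop.

```python
from typing import List


def plan_loops(session_count: int,
               upstream_items: int = 0,
               from_upstream: bool = False) -> List[int]:
    """Return the size (number of sessions) of each loop the block creates.

    Hypothetical model of the rules described above:
    - from_upstream on: a single loop, one iteration per upstream item.
    - upstream items present: one loop of session_count per incoming item.
    - otherwise (assumed): one loop of session_count iterations.
    """
    if from_upstream:
        return [upstream_items] if upstream_items > 0 else []
    if upstream_items > 0:
        return [session_count] * upstream_items
    return [session_count]


print(plan_loops(3))                                        # one loop of 3 sessions
print(plan_loops(3, upstream_items=2))                      # two loops of 3 sessions
print(plan_loops(3, upstream_items=4, from_upstream=True))  # one loop of 4 sessions
```

Sessions within one entry of the returned list cook serially, while separate entries correspond to loops that can run in parallel.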

Shared Servers (Deprecated) ¶

Notes ¶

-

The Begin node generates work items that lock a service worker process to the block. This ensures no other work items in the graph can interfere with the operations performed by the block.

-

The service worker is unlocked once the last End node work item for the block evaluates. If the graph is canceled or stops cooking before that point, all locked service processes are unlocked.

-

You can set the Reset Service parameter on the Begin node to ensure that the state of the service process is reset at the beginning of each loop iteration.

-

The state of each service process can be inspected using the PDG Services list.

-

You should color the start and end nodes of a block the same to make their relationship clear. The default nodes put down by the built-in service block tools have different colors. You can change the node colors. This is useful to distinguish nested loops.

The border around the block takes on the color of the end node.
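The lock lifecycle described in the notes above (lock at Begin, unlock after the last End work item, unlock on cancel) resembles a context manager. The sketch below is an analogy in plain Python only, not how PDG implements it: `ServiceWorker`, `service_block`, and the worker and block names are all hypothetical.

```python
from contextlib import contextmanager


class ServiceWorker:
    """Hypothetical stand-in for a service worker process."""

    def __init__(self, name):
        self.name = name
        self.locked_by = None  # name of the block holding the lock, if any


@contextmanager
def service_block(worker, block_name):
    """Lock the worker for the duration of a block, then always unlock it."""
    if worker.locked_by is not None:
        raise RuntimeError(f"{worker.name} is already locked by {worker.locked_by}")
    worker.locked_by = block_name  # Begin: lock the worker to this block
    try:
        yield worker
    finally:
        worker.locked_by = None  # End, cancel, or failure: release the lock


worker = ServiceWorker("houdini_worker_0")
with service_block(worker, "block_a"):
    print("inside block, locked by:", worker.locked_by)
print("after block, locked by:", worker.locked_by)
```

The `finally` clause plays the role of the cancel path: the worker is released whether the block completes normally or stops early.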

Examples ¶

The following directories contain example HIP files demonstrating how to use Houdini and Maya service blocks.

-

$HFS/houdini/help/files/pdg_examples/top_houdinipipeline

-

$HFS/houdini/help/files/pdg_examples/top_mayapipeline