Hmm, thanks. In our case, the ifd's themselves are small - we do already use packed primitives for large static geometry. The costly intermediates are surfaces with procedural displacement, constructed on the fly in a shader derived from on-disk texture images.

On a lark, I tried gluing two separate .ifd's together with a text editor:

the header of one of them,

then all the “Retained geometry” entries from both files,

then the “Main” section from one of them down to the ray_raytrace,

then the “Main” from the other one,

and another ray_raytrace.

Result: No error reports. It created both images, and they were different! The first of them might have been correct… the second was clearly wrong. So at least some settings from the first image were being inherited by the second when I didn't want them to be.

The .ifd file format description has some discussion of scope of settings, so maybe the undesired inheritance could be fixed, but I'm kinda shooting in the dark.
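For reference, the hand edit described above can be sketched as a small text-processing script. This is only a sketch under loud assumptions: the marker strings (`# Retained geometry`, `# Main image`) and the helper names `split_ifd` and `merge_ifds` are hypothetical, chosen to mirror the sections named in this post, and should be checked against the actual generated files. It reproduces the gluing only; it does nothing about the unwanted inheritance of settings between the two renders.

```python
def split_ifd(text, retained_marker, main_marker, render_cmd):
    """Split IFD text into (header, retained, main) lists of lines.

    The markers are assumptions about how the sections are labelled.
    Everything after the first render command line is dropped.
    """
    header, retained, main = [], [], []
    section = header
    for line in text.splitlines():
        if section is None:
            break  # already past the render command
        if line.startswith(main_marker):
            section = main
        elif section is header and line.startswith(retained_marker):
            section = retained
        section.append(line)
        if section is main and line.split()[:1] == [render_cmd]:
            section = None
    return header, retained, main


def merge_ifds(texts,
               retained_marker="# Retained geometry",  # assumed marker
               main_marker="# Main image",             # assumed marker
               render_cmd="ray_raytrace"):
    """Glue several IFD texts together in the order described above:
    first header, all retained sections, then each main section."""
    parts = [split_ifd(t, retained_marker, main_marker, render_cmd)
             for t in texts]
    out = list(parts[0][0])
    for _, retained, _ in parts:
        out.extend(retained)
    for _, _, main in parts:
        out.extend(main)
    return "\n".join(out) + "\n"
```

Geometry that appears in both files is emitted twice here, just as in the manual edit.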

Technical Discussion » Making .ifd's that render multiple /out nodes?

-

- stuartnlevy

- 8 posts

- Offline

I tried tying multiple ifd-output nodes as input to a Merge ROP. Rendering that Merge ROP just triggers each of the dependent ROPs to write its own, separate ifd file.

Technical Discussion » Making .ifd's that render multiple /out nodes?

Is there a way to write .ifd's that, when rendered, will create images for multiple /out nodes in one mantra invocation?

We have some scenes that generate fairly expensive intermediate geometry. For each animation frame, we need to create several /out images that depend on those same intermediates.

Ideally we'd invoke mantra once per frame, and feed it an .ifd that tells it to make the intermediate stuff internally and render all the associated outputs.

But we've been generating .ifd files by setting up an /out node to create ifd's and then invoking ropnode.render(). It creates a self-contained ifd which renders just that frame.

Can we make a single ROP node (merge??) that depends on several other ROPs, such that <overall_rop>.render() creates a single .ifd that does the whole job?

Or: is there a safe text-processing way to merge multiple, separately-created .ifd files into a single one that creates the multiple related images? I might be able to parse the generated .ifd's well enough, but would prefer a less hack-y way.

Edited by stuartnlevy - Sept. 17, 2020 15:55:45

Technical Discussion » HQueue security / access control?

Followup: I talked with SideFX support. They may eventually release a version of HQueue which does have some sort of access control, but for now, it doesn't have any.

If you use HQueue, please beware: anyone on the network who can connect to TCP port 5000 on your HQueue server can submit jobs to your render farm. And those jobs can run any command, not just Houdini scene processing.

So, make sure your HQueue server's port 5000 is (at least) not accessible to the general Internet! It is handy to be able to check the status of jobs, etc. from anywhere - it's not obvious that this is also risky.

I urged SideFX to at least warn people at installation time about the need for firewalling the server. They've filed RFE #89564 to add such a warning. Thank you, support people!
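A quick way to check the exposure described above is to try opening a TCP connection to the server's port 5000 from a machine that should not have access. The sketch below uses only Python's standard `socket` module; the function name `port_is_reachable` and the hostname in the usage comment are hypothetical. A refused connection only shows that this particular network path is blocked, not that the server is safe in general.

```python
import socket


def port_is_reachable(host, port=5000, timeout=2.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, unreachable, or name lookup failed.
        return False


# Usage, run from OUTSIDE the network that is meant to reach the farm
# ("hqueue.example.org" is a placeholder hostname):
# print(port_is_reachable("hqueue.example.org"))
```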

Technical Discussion » HQueue security / access control?

We're trying out HQueue and admiring its job management features.

However, I'm wondering about access control and security. It looks as though anything that can connect to the server's port 5000 can submit an arbitrary shell command job. Though it runs as hquser, it would have access to lots of things.

Of course we can use a firewall to limit access to the server, but this still makes me very uneasy. Before this, we'd been using an ssh-based homegrown job distribution setup – much less capable than HQueue, but we knew that a job could only be run by someone using one of our login accounts, rather than by anyone attached to our network.

Am I missing something? Is there provision for enabling some safe user-based access control for HQueue?

Technical Discussion » Houdini 15.0 vs OpenVDB: can a plugin use a different OpenVDB version than Houdini's libopenvdb_sesi?

Hello,

We're using Houdini 15.0.something. Internally it seems to use OpenVDB 3.0.0 (“v3_0_0_sesi”), judging from the symbol names in dsolib/libopenvdb_sesi.so and toolkit/include/openvdb/*.

We have a VDB-related HDK plugin, and would like to use some features included in a later OpenVDB release - 3.2.0. So, two questions:

(a) Can we even link the two together? It seems like there's a good chance that the symbol names won't collide, since the libopenvdb_sesi names are fully qualified (e.g. “openvdb::v3_0_0_sesi::initialize()”).

(b) Ultimately we'd want to create a v3.2.0 VDB grid and somehow turn it into a v3.0.0 VDB grid. I'm guessing that should be possible by iterating over the 3.2 source grid and copying values into the 3.0 destination grid, at the cost of needing twice as much memory.

I had hoped for a while that we might depend on ABI compatibility within the v3.x line of OpenVDB and somehow magically be able to substitute a v3.2.0 library in place of the built-in Houdini v3.0.0_sesi library. But no, ABI compatibility is only promised to work for Grid and Transform methods, not the whole suite. So I expect we'd have to do (b) above. Right?

Edited by stuartnlevy - Dec. 12, 2016 15:14:21

Technical Discussion » Un-cooked nodes? SOP works properly in GUI render, doesn't in batch render

We have a scene with an Attribute VOP SOP node, which has an expression call to a CHOP network. The expression needs to know a “timestep” value related to the current frame, as read from an external file, and uses that timestep to process point positions in a time-dependent way. There are expressions like:

chopf("../chopnet1/rename1/currentTime",ch("../TIME_CONTROL/timestep")+$F*0)

ch("../TIME_CONTROL/timestep")*ch("../TIME_CONTROL/duration")) / ch("../TIME_CONTROL/duration")

The whole thing works perfectly when rendered interactively in the Houdini GUI. You set the time to something, press Render, all the processing happens as intended.

However, when we do batch rendering, and say (in python) something like

ropnode = hou.node('/out/whatever_ROP')

ropnode.render(frame_range=(frameno, frameno, 1), method=hou.renderMethod.FrameByFrame, ignore_inputs=True)

… then it doesn't work. The AttribVOP node somehow uses the wrong timestep value. We've tried also printing out that same timestep value in a pre-frame script. The printed value is correct but the node uses some other value, and does the wrong thing.

The mysterious “+$F*0” was an attempt to flag the expression as frame-dependent, but it didn't help.

Workaround-which-may-give-a-clue:

If in the batch pipeline we force the AttribVOP node to cook(force=True) before the render, then it uses the correct value - everything goes as it should.

If we just invite the AttribVOP to cook, using node.cook(force=False), then it doesn't work - we get the wrong values again. Yet node.needsToCook() returns True.

Can anyone guess why the AttribVOP node needs to be cooked, but isn't being cooked automatically? We don't need to hand-cook nodes in any other circumstance when using this batch rendering pipeline.
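For anyone hitting the same thing, the workaround above can be wrapped in a small helper. This is a sketch, not an explanation of the underlying problem: the helper name `render_frame_with_forced_cook` and the AttribVOP node path in the usage comment are hypothetical, and the only Houdini calls it relies on are the `cook(force=True)` and `render(...)` calls already shown in this post.

```python
def render_frame_with_forced_cook(rop, nodes_to_cook, frameno, **render_kwargs):
    """Force-cook the given nodes, then render a single frame.

    rop and nodes_to_cook are expected to be hou node objects;
    render_kwargs are passed straight through to rop.render().
    """
    for node in nodes_to_cook:
        # force=True is what made the difference; force=False did not help.
        node.cook(force=True)
    rop.render(frame_range=(frameno, frameno, 1), **render_kwargs)


# Usage inside a Houdini Python session (the AttribVOP path is a placeholder):
# import hou
# render_frame_with_forced_cook(
#     hou.node('/out/whatever_ROP'),
#     [hou.node('/obj/geo1/attribvop1')],
#     frameno,
#     method=hou.renderMethod.FrameByFrame,
#     ignore_inputs=True,
# )
```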

Edited by stuartnlevy - Aug. 26, 2016 18:26:20

Houdini Indie and Apprentice » Houdini Apprentice won't start

Enivob

When you install Apprentice, don't install the license server, choose the other option (which is for free usage).

Also, on OSX, the very first time you run Houdini your OSX user account must have administrator privileges or the license files can not be written to the user name correctly.

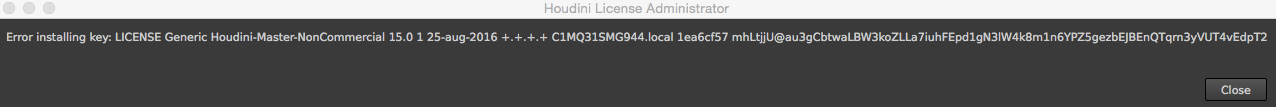

This is incredibly helpful! We didn't know about the admin-privileges requirement when the License Administrator is installing a license. If you don't have that privilege, it gives a completely un-helpful error report , like this:

Once installed, the license is usable from any account on that machine.

We're trying to teach a class involving Houdini, and some of the students had mysterious problems. This looks to be at least one of the causes. Enivob, you are a lifesaver.
